News

ZIP Codes: The Simple Fix For Advertising ROI Measurement

(As published in AdExchanger.)

By Rick Bruner, CEO, Central Control

One of the hottest trends in advertising effectiveness measurement, especially with privacy concerns killing user-level online tracking, is geographic incrementality experiments. These experiments are cost-effective, straightforward and reliable, if done right.

Geo media experiments typically use large marketing areas, such as Nielsen’s Designated Market Areas (DMAs). Unlike traditional matched market testing, this modern approach involves randomizing DMAs, ideally all 210, into test and control groups. This way, advertisers with first-party data can measure true sales lift in house without external services. For those lacking in-house sales data, third-party panels, such as those from Circana and NielsenIQ, offer alternatives compatible with this kind of test design.
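The randomization step described above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the DMA identifiers, the `assign_geos` and `relative_lift` helpers and the simple 50/50 split are all assumptions made for the example, and a real experiment would usually also balance the groups on market size or prior sales.

```python
import random
from statistics import mean


def assign_geos(geo_ids, seed=0):
    """Randomly split geo units into test and control groups of equal size."""
    rng = random.Random(seed)          # seeded so the assignment is reproducible
    shuffled = list(geo_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return set(shuffled[:half]), set(shuffled[half:])


def relative_lift(sales_by_geo, test, control):
    """Difference in mean sales per geo (test minus control), relative to control."""
    test_mean = mean(sales_by_geo[g] for g in test)
    control_mean = mean(sales_by_geo[g] for g in control)
    return (test_mean - control_mean) / control_mean


# Hypothetical identifiers standing in for the 210 DMAs.
dma_ids = [f"DMA-{i:03d}" for i in range(1, 211)]
test_group, control_group = assign_geos(dma_ids, seed=42)
```

After the campaign runs only in the test markets, `relative_lift` compares first-party sales per market across the two groups; because assignment was random, the difference can be read as the effect of the advertising rather than of pre-existing market differences.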

High-quality, randomized controlled trials (RCTs) – akin to clinical trials in medicine – are the best source of evidence of cause-and-effect relationships, including advertising’s impact on sales.

Statistical models, including synthetic users, artificial intelligence, machine learning, attribution, all manner of quasi-experiments and other observational methods are slower, more expensive and less transparent forms of correlation – not measurement of causation. They may be effective for audience targeting, but they are not for quantifying ROI.

Imagine, however, the potential for conducting geo experiments using ZIP codes instead of DMAs.

Targeting with ZIP codes

An advantage to DMAs is that they are universally compatible with all media types. ZIP codes, on the other hand, pose challenges to experiments in digital media. Targeting with ZIP codes online often relies on inference from IP addresses, which is unreliable and increasingly privacy-challenged. Geo-location signals from mobile devices also contribute ZIP codes to user profiles, which is bad for experiments, as a single device/account can be tagged with multiple ZIP codes based on where the user has recently visited.

A key to the reliability of this kind of geo experiment is ensuring that the ZIP codes used for randomized media exposures match the ZIP codes where audience members receive their bills, as recorded in company CRM databases. Each device and user should be targeted by only one ZIP code: their residential one.

To adopt this technique, media companies can take two transformative steps:

Use primary ZIP code targeting: Major players like Google and Meta already collect extensive user data, often appending multiple ZIP codes to a single device. For experiments, these companies should offer a “primary” ZIP code targeting option, based on the user’s profile or most frequently observed ZIP code for their devices.

Implement anonymous registration with ZIP codes: Publishers should require registration to access most free content, offering an “anonymous” account type that doesn’t require an email address. Users would provide a username, password and home ZIP code, enabling publishers to enhance audience profiles while maintaining user anonymity.
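As a rough illustration of the “primary” ZIP code idea, the sketch below picks one ZIP code per device: the one declared in the user’s profile if there is one, otherwise the ZIP code observed most often. The `primary_zip` function and its inputs are hypothetical; no platform is known to expose exactly this logic.

```python
from collections import Counter


def primary_zip(observed_zips, profile_zip=None):
    """Return a single ZIP code for a device or account.

    Prefers the ZIP code declared in the user's profile (assumed to be the
    residential one); otherwise falls back to the most frequently observed.
    """
    if profile_zip:
        return profile_zip
    if not observed_zips:
        return None
    # most_common(1) returns [(zip_code, count)] for the top entry.
    return Counter(observed_zips).most_common(1)[0][0]


# A device seen mostly at home, once while travelling.
print(primary_zip(["10001", "94105", "10001"]))
```

Resolving every device to one ZIP code before randomization keeps a traveller from being counted in both the test and control groups.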

These strategies would significantly improve ROI measurement, offering a more powerful and simpler mechanism than cookies or other current alternatives. Unlike cookies, which were always unreliable for measuring ROI, these methods provide a privacy-centric, fraud-resistant solution that doesn’t require complex data exchanges, clean rooms, tracking pixels or user IDs.

Industry bodies like the IAB, IAB Tech Lab, MMA, ANA, MSI and CIMM should advocate for this approach, which would revolutionize advertising incrementality measurement.

With over 30,000 addressable ZIP codes compared to 210 DMAs, the potential for greater statistical power and more reliable ROI measurement is immense. As Randall Lewis, Senior Principal Economist at Amazon, told me, “the statistical power difference between user IDs and ZIP codes in intent-to-treat experiments can be small, with the right analysis methods.”

Adopting this approach would mark a significant leap forward, making high-quality experiments more accessible and reliable than ever before, ensuring a privacy-pure and fraud-proof approach to measuring advertising effectiveness.

The Future of Measuring Advertising ROI is "MPE": Models Plus Experiments

Marketing ROI measurement is going through a generational transformation right now. Following MMM (marketing/media mix models, in the ‘90s) and MTA (multi-touch attribution, in the 2000s) comes an emerging best practice I call “MPE”: Models Plus Experiments.

MPE refers to a process of constant improvement to existing marketing ROI models, particularly MMM, by fine-tuning model assumptions and coefficients with a practice of regular experiments for measuring incremental ROI.

The Gold Standard Is Not a Silver Bullet

Scientists consider the type of experiment known as a “randomized controlled trial” (RCT) to be the “gold standard” for measuring cause and effect, but the approach is not a silver bullet for advertisers.

RCTs are equivalent to “clinical trials” for proving efficacy in medicine: outcomes of a test group are compared against those of a control group, and, critically, the two groups are assigned by a random process before the intervention of the experiment. The approach has a reputation among advertisers as being difficult to implement.

That concern is overstated, however. Running good media experiments is a lot easier than most advertising practitioners think. Industry measurement experts have made a lot of progress in the past decade, including the revolutionary ad-experimentation technique known as "ghost ads," which is widely deployed by most of the biggest digital media companies, generally for free. Central Control has also recently introduced a new RCT media experiment design, Rolling Thunder, which is simple to implement and can be used for many types of media by many types of advertisers.

Running a good experiment is certainly far easier than building a complex statistical model to try to explain what is driving the best ROI in the mix – an approach many advertisers spend much time and money on.

Another legitimate criticism of experiments is that it is hard to generalize what works in the mix from a single test. True enough. Experiments offer a snapshot of the effect of one campaign, at a point in time, in select media, with a given creative, a particular product offer, specific campaign targeting, and so forth. But which of those factors mattered most in driving that lift?

In general, to assess that, you need to run more experiments.

According to the “hierarchy of evidence,” the ranking of different methods for measuring causal effect (from which comes the idea that RCT is the “gold standard”), the only practice that regularly outranks an RCT is a meta-analysis of the results from lots of RCT studies.

Think of a large set of benchmarks of advertising experiments, scored by the various factors within the control of advertisers, such as ad format type, publisher partners, media channels, and so on. Such a system of analysis is easily within reach of any large advertiser (or publisher or agency) that routinely executes lots of experiments. For marketers working in a Bayesian framework, the lifts measured by lots of similar experiments become ideal “priors” for estimating the effect of future campaigns.
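One simple way to picture how past experiments become a prior is sketched below. It assumes each experiment is summarized as a lift estimate with a standard error, pools them with a fixed-effect, inverse-variance-weighted average (which treats all experiments as measuring the same underlying effect), and then combines that prior with a new result using a normal-normal update. The function names and all numbers are hypothetical, and a random-effects model may suit heterogeneous campaigns better.

```python
def pool_lifts(results):
    """Inverse-variance-weighted mean of (lift, standard_error) pairs."""
    weights = [1.0 / se ** 2 for _, se in results]
    total = sum(weights)
    pooled = sum(w * lift for w, (lift, _) in zip(weights, results)) / total
    pooled_se = (1.0 / total) ** 0.5
    return pooled, pooled_se


def update(prior_mean, prior_se, obs_lift, obs_se):
    """Normal-normal conjugate update: precision-weighted average of prior and data."""
    w_prior, w_obs = 1.0 / prior_se ** 2, 1.0 / obs_se ** 2
    post_mean = (w_prior * prior_mean + w_obs * obs_lift) / (w_prior + w_obs)
    post_se = (1.0 / (w_prior + w_obs)) ** 0.5
    return post_mean, post_se


# Hypothetical lifts from past experiments on similar campaigns.
past = [(0.04, 0.02), (0.08, 0.02), (0.05, 0.03)]
prior_mean, prior_se = pool_lifts(past)

# A new, noisy experiment is pulled toward the benchmark.
post_mean, post_se = update(prior_mean, prior_se, obs_lift=0.12, obs_se=0.04)
```

The more experiments feed the benchmark, the tighter the prior becomes, and the less any single noisy test can swing the estimate.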

The shrewdest advertisers are increasingly adopting this practice, dubbed “always-on experiments.”

The Best Models Are Wrong, But Useful: Experiments Make Them Better

But even such an RCT benchmark doesn’t take the place of a good model. As they say, all models are wrong, but some are useful. Models are good for the big picture, zooming in and out to different degrees of granularity about how the mix is understood to work. They provide forecasting and scenario-planning capabilities, cost/benefit trade-off planning, simple summaries for strategic planning and other merits that won’t be supplanted by practicing regular ROI experiments.

Regular experiments are, however, a critical missing factor in the ROI analysis for too many advertisers. Experiments enable analysts to make the models better by recalibrating assumptions in their models with better evidence.

That is what I mean by “Models Plus Experiments”: honing coefficients in MMM and MTA models through the practice of always-on experimentation. (And, when I say “experiments,” I specifically mean RCT.)

MediaVillage: Coca-Cola Leads Brave New World for Marketers

By Bill Harvey and Rick Bruner

Nothing like this year has ever happened before. So many companies are down double digits in 2020 YOY revenues that CEOs must rapidly do something different or risk termination.

Doing something different has not been Standard Operating Procedure (SOP) for a long time.

Marketing Mix Modeling (MMM) turned out to be essential for C-suites over the past three decades in rationalizing their marketing decisions. MMM is not conducive to doing anything rapidly, nor differently. "Bayesian Priors" is a fancy name many marketing modelers use to mean "last year's MMM results", meaning that new MMM analyses are anchored to year-ago MMMs. MMM is a methodology that encourages tweaking around the edges rather than doing anything differently.

Which is not to say discard MMM. However, it is not going to be very useful right now in the crisis we are in. All of the slowly accumulated benchmarks and baselines are out the window. The 104-week gestation period is inimical to companies' survival.

Are we being dramatic? Obviously not, based on a quick study of what Coca-Cola is doing. A complete reorganization globally, 200 brands on the chopping block, the 200 brands being kept reorganized into a new reporting structure designed to "bring marketing closer to the consumer" with a new emphasis on innovation.

The NY Times quotes Coke's CEO, James Quincey, saying the company is "reassessing our overall marketing return on investment on everything from ad viewership across traditional media to improving effectiveness in digital."

Forbes: Randomized Control Testing: A Science Being Tested To Measure An Advertiser’s ROI

The ability to measure the impact advertising has on sales has been the holy grail for marketers, agencies and data scientists. It dates back to John Wanamaker (1838-1922), who has been credited with the adage, “Half the money I spend on advertising is wasted; the trouble is I don't know which half”. Since then, with more ad supported media choices and more brands, consumers have been bombarded by ad messages daily. Meanwhile, advertisers continue to seek better ways to measure the sales impact from their media campaigns.

Attempting to measure the return-on-investment (ROI) of an ad campaign is not new. In the 1990s some prominent advertisers, including Procter & Gamble, began using media mix modeling (MMM) to measure the ROI of their ad campaigns. Although the media landscape was far less complex than today, MMM had several inadequacies, including too few data points and a failure to factor in either the consumer journey or brand messaging. Nonetheless, MMM remained an industry fixture for many advertisers and agencies. Other marketers used recall and awareness studies as a substitute for sales to measure the advertising impact.

In July, the Advertising Research Foundation (ARF) began a new initiative called RCT21 (RCT stands for Randomized Control Testing), the latest iteration of measuring an ad campaign’s impact on sales. In the press release the ARF stated that the study “will apply experimentation methods to measure incremental ROI of large ad campaigns run across multiple media channels at once, including addressable linear TV and multiple major digital media platforms.”

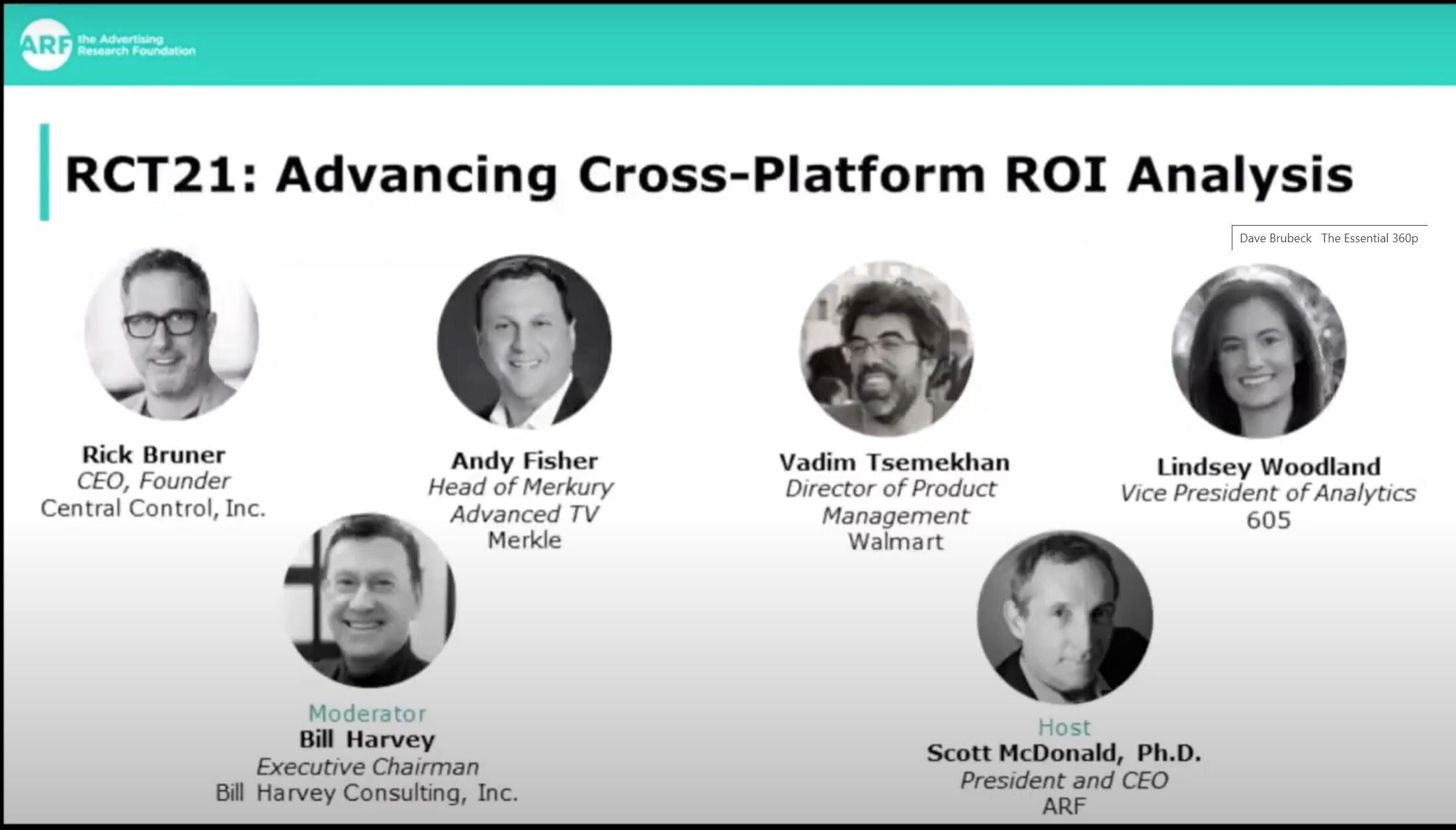

ARF Insights Studio - RCT21: Advancing Cross-Platform ROI Analysis

The ARF, in collaboration with 605, Central Control and Bill Harvey Consulting, is championing a research initiative designed to establish methods for applying randomized control testing (RCT) to cross-platform advertising impact analysis.

ARF Announces Initiative To Advance Cross-Platform ROI Analysis Through Application Of Randomized Control Trial Measurement

The ARF, in conjunction with 605, Central Control and Bill Harvey Consulting, will identify best practices to help advertisers better pinpoint the contributing factors to digital and TV campaign effectiveness

The Advertising Research Foundation (ARF), the industry leader in advertising research among brand advertisers, agencies, research firms, and media, today announced a collaborative research initiative designed to establish methods for applying randomized control testing (RCT) to cross-platform advertising impact analysis.

ARF, New York - Businesswire, July 14

The proof-of-concept study, named RCT21, will apply experimentation methods to measure incremental ROI of large ad campaigns run across multiple media channels at once, including addressable linear TV and multiple major digital media platforms.

The ARF is conducting the research in collaboration with 605, Central Control and Bill Harvey Consulting. A select number of national advertisers are also being recruited to join in the project.

MediaPost: 'Why The Most Important New Industry Acronym May Be RCT'

It’s been a while since I’ve been at an industry presentation where someone invoked the old chestnut -- usually attributed to Philadelphia retailer John Wanamaker -- that “half the money I spend on advertising is wasted; the trouble is I don’t know which half.” And if a new initiative backed by the Advertising Research Foundation (ARF) works out, it’s possible nobody will ever repeat it again.

The initiative, which is already underway and backed by at least two big media-buying organizations -- Horizon Media and Dentsu Aegis Network’s Merkle -- seeks to operationalize a more scientific method of proving which ads and media exposures are not wasted.

The method, known as “randomized control testing” -- or what will likely become the acronym du jour on Madison Avenue for the next several years, RCT -- goes beyond classic marketing and/or media mix modeling and so-called attribution systems, to scientifically correlate and measure the effect of actual advertising.

“This really gets to the heart of the business of advertising and the job of the marketer,” Rick Bruner explained to me during a “pre-brief” of the project, which is being unveiled today.

Did Your Ads Work? From Simple Attribution to Ghost Ads

Industry experts Ari Paparo (CEO, Beeswax) and Rick Bruner (CEO, Central Control) give an educational overview of digital attribution techniques. This webinar starts with the basics of attribution and multitouch attribution (MTA), then delves into more complex topics such as the challenges presented by cookie instability, channel complexity and more, along with a dive into the new trend of incrementality and the breakthrough "ghost ads" methodology.
